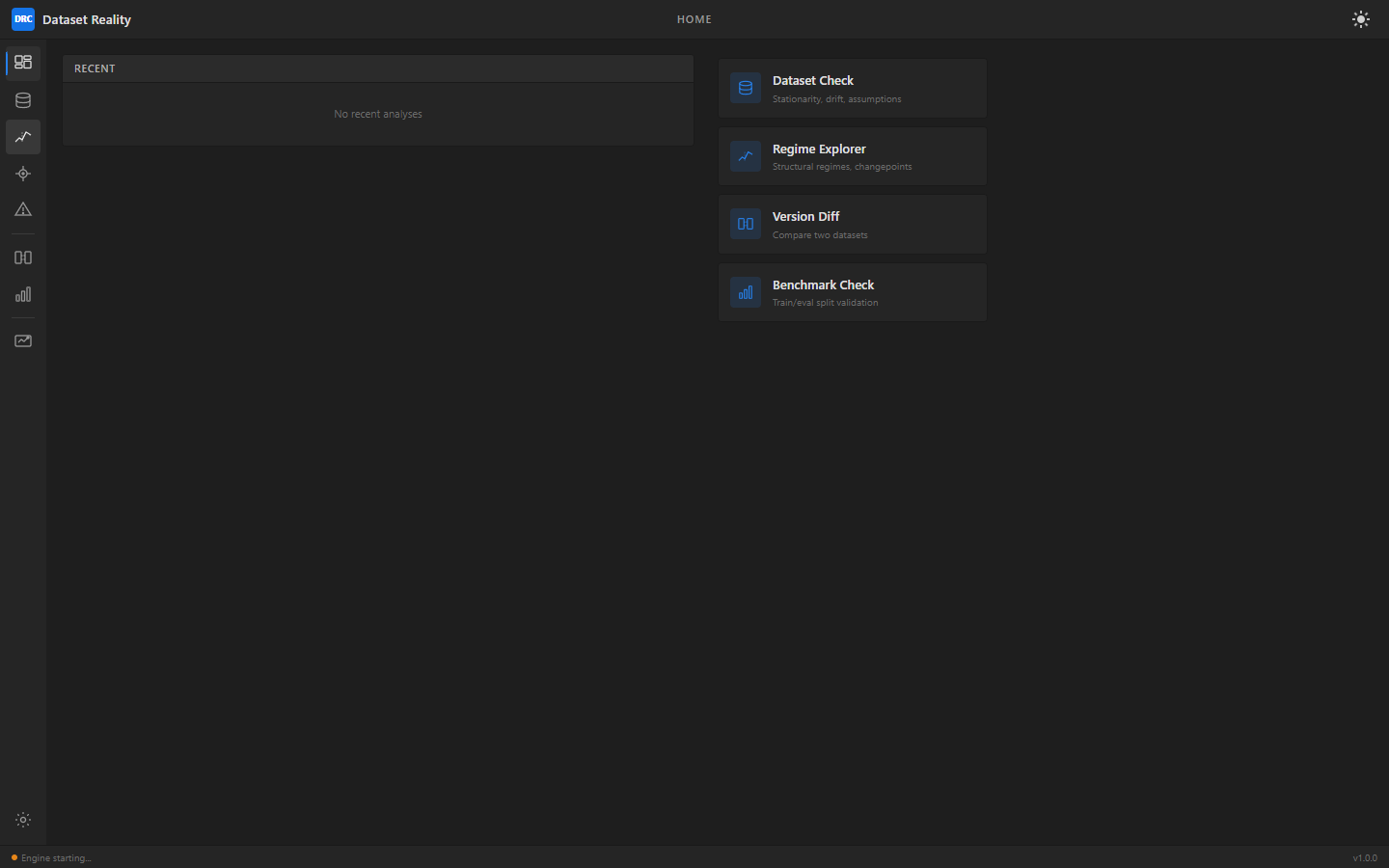

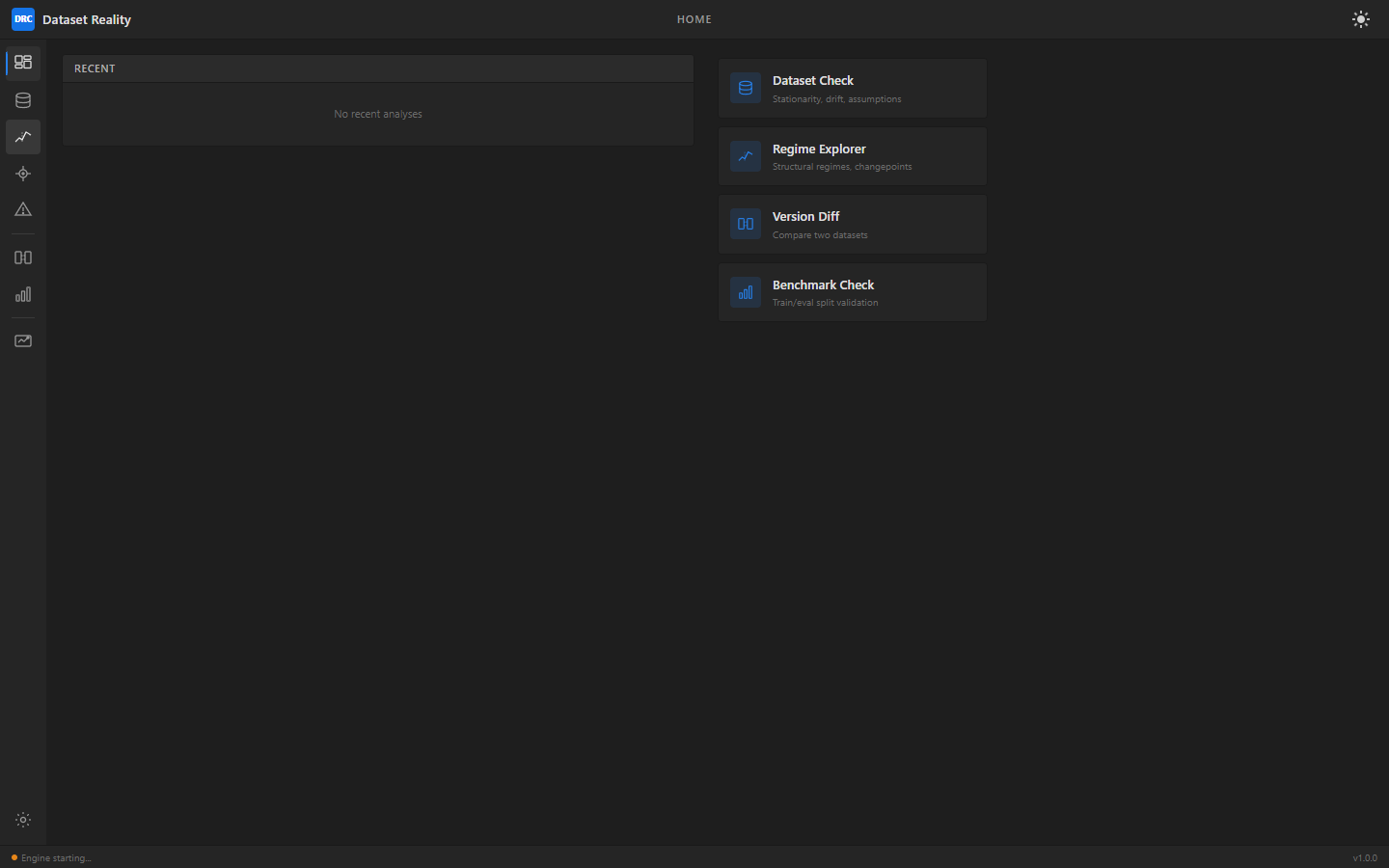

Know Your Data Before You Train

Custom-built AI stack focused on downside risk and failure prevention, not generic no-code automation. The engine does not depend on retrieval patching for memory. It adapts to validated new information in under a minute and commits only what passes a five-point source-truth gate.

Dataset Reality Check analyzes dataset quality before model training. Detect drift, regime shifts, feature fragility, and assumption violations so you don't waste weeks training on broken data.
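To make "detect drift" concrete, here is a minimal sketch of one common approach: compare an earlier slice of a numeric column against a later slice using the two-sample Kolmogorov-Smirnov statistic. This is an illustration only, not a description of how Dataset Reality Check works internally. The function names `ks_statistic` and `has_drifted` and the `0.2` threshold are hypothetical choices made for this example.

```python
from bisect import bisect_right


def ks_statistic(reference, current):
    """Largest gap between the empirical CDFs of two numeric samples (0.0 to 1.0)."""
    ref = sorted(reference)
    cur = sorted(current)
    if not ref or not cur:
        raise ValueError("both samples must be non-empty")

    max_gap = 0.0
    # The gap between two step-function CDFs can only change at observed values.
    for x in set(ref) | set(cur):
        cdf_ref = bisect_right(ref, x) / len(ref)
        cdf_cur = bisect_right(cur, x) / len(cur)
        max_gap = max(max_gap, abs(cdf_ref - cdf_cur))
    return max_gap


def has_drifted(reference, current, threshold=0.2):
    """Flag drift when the KS statistic exceeds a threshold.

    The 0.2 default is an arbitrary, hypothetical cutoff for illustration;
    a real check would calibrate it to sample size and tolerance for false alarms.
    """
    return ks_statistic(reference, current) > threshold


if __name__ == "__main__":
    early = [0.1 * i for i in range(100)]        # earlier slice of a feature
    late = [0.1 * i + 5.0 for i in range(100)]   # later slice, shifted upward
    print("drift in shifted slice:", has_drifted(early, late))
    print("drift in identical slice:", has_drifted(early, early))
```

A statistic near 0 means the two slices look alike; a value near 1 means they barely overlap. Regime shifts and feature fragility call for different checks, which this sketch does not attempt.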

Supports CSV, TSV, and Parquet · Up to 500 MB per file · Hosted launch accepts non-regulated data only
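If you want to confirm a file fits these limits before uploading, a local check along the following lines may help. It is a sketch under stated assumptions: `check_upload` is a hypothetical helper, not part of any published client, and "500 MB" is read as 500,000,000 bytes, the stricter interpretation.

```python
from pathlib import Path

# Formats listed above.
ALLOWED_SUFFIXES = {".csv", ".tsv", ".parquet"}
# Assumption: 500 MB interpreted as decimal megabytes (the stricter reading).
MAX_BYTES = 500 * 1000 * 1000


def check_upload(path, size_bytes=None):
    """Return a list of problems with a candidate file; an empty list means it looks fine.

    size_bytes may be passed explicitly; otherwise the size is read from disk.
    """
    p = Path(path)
    problems = []

    if p.suffix.lower() not in ALLOWED_SUFFIXES:
        problems.append(f"unsupported format: {p.suffix or '(no extension)'}")

    size = p.stat().st_size if size_bytes is None else size_bytes
    if size > MAX_BYTES:
        problems.append(f"file is {size} bytes, over the {MAX_BYTES}-byte limit")

    return problems


if __name__ == "__main__":
    print(check_upload("train.csv", size_bytes=12_000_000))
    print(check_upload("train.xlsx", size_bytes=12_000_000))
```

The check covers format and size only. Whether a dataset counts as regulated cannot be determined from the file name or size and still needs a human decision.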